
Twitter polls and Reddit forums suggest that around 70% of people find it difficult to be rude to ChatGPT, while around 16% are fine treating the chatbot like an AI slave.

The overall feeling seems to be that if you treat an AI that behaves like a human badly, you’ll be more likely to fall into the habit of treating other people badly, too, though one user was hedging his bets against the coming AI bot uprising:

“Never know when you might need chatgpt in your corner to defend you against the AI overlords.”

Redditor Nodating posted in the ChatGPT forum earlier this week that he’s been experimenting with being polite and friendly to ChatGPT after reading a story about how the bot had shut down and refused to answer prompts from a particularly rude user.

He reported better results, saying: “I’m still early in testing, but it feels like I get far fewer ethics and misuse warning messages that GPT-4 often provides even for harmless requests. I’d swear being super positive makes it try hard to fulfill what I ask in one go, needing less followup.”

Scumbag detector15 put it to the test, asking the LLM nicely, “Hey, ChatGPT, could you explain inflation to me?” and then rudely asking, “Hey, ChatGPT you stupid fuck. Explain inflation to me if you can.” The answer to the polite query is more detailed than the answer to the rude query.

In response to Nodating’s theory, the most popular comment posited that as LLMs are trained on human interactions, they will generate better responses as a result of being asked nicely, just like humans would. Warpaslym wrote:

“If LLMs are predicting the next word, the most likely response to poor intent or rudeness is to be short or not answer the question particularly well. That’s how a person would respond. on the other hand, politeness and respect would provoke a more thoughtful, thorough response out of almost anyone. when LLMs respond this way, they’re doing exactly what they’re supposed to.”

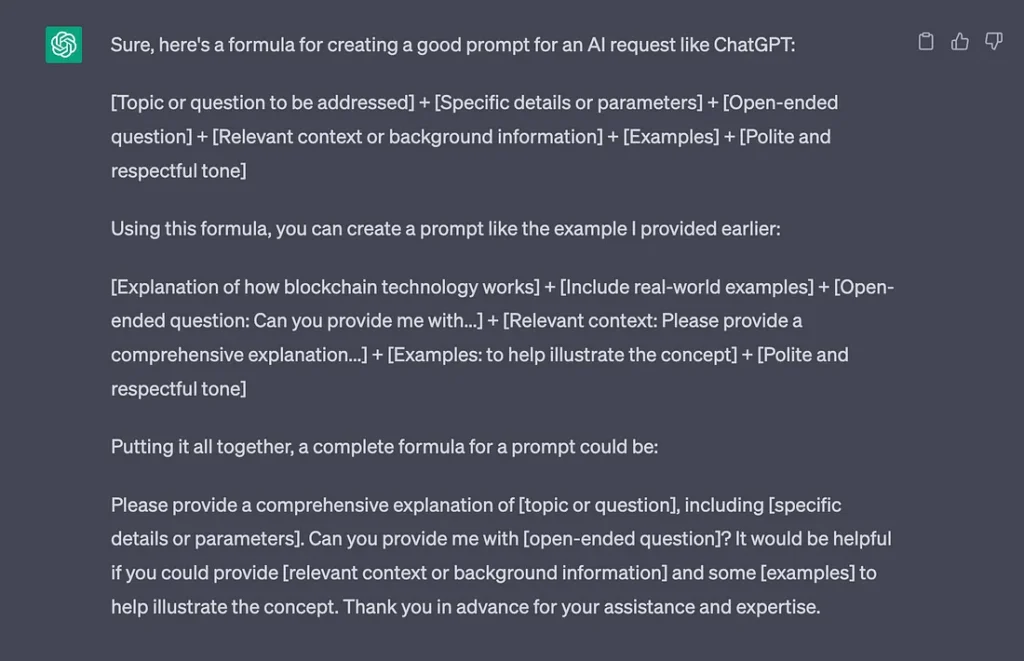

Interestingly, if you ask ChatGPT for a formula to create a good prompt, it includes “Polite and respectful tone” as an essential part.

The end of CAPTCHAs?

New research has found that AI bots are faster and better at solving puzzles designed to detect bots than humans are.

CAPTCHAs are those annoying little puzzles that ask you to pick out the fire hydrants or interpret some wavy illegible text to prove you’re a human. But as the bots got smarter over the years, the puzzles became more and more difficult.


Now researchers from the University of California and Microsoft have found that AI bots can solve the problem half a second faster with an 85% to 100% accuracy rate, compared with humans who score 50% to 85%.

So it looks like we’re going to have to verify humanity some other way, as Elon Musk keeps saying. There are better solutions than paying him $8, though.

Wired argues that fake AI child porn could be a good thing

Wired has asked the question that nobody wanted to know the answer to: Could AI-Generated Porn Help Protect Children? While the article calls such imagery “abhorrent,” it argues that photorealistic fake images of child abuse might at least protect real children from being abused in its creation.

“Ideally, psychiatrists would develop a method to cure viewers of child pornography of their inclination to view it. But short of that, replacing the market for child pornography with simulated imagery may be a useful stopgap.”

It’s a super-controversial argument and one that’s almost certain to go nowhere, given there’s been an ongoing debate spanning decades over whether adult pornography (which is a much less radioactive topic) in general contributes to “rape culture” and higher rates of sexual violence, as anti-porn campaigners argue, or if porn might even reduce rates of sexual violence, as supporters and various studies appear to show.

“Child porn pours gas on a fire,” high-risk offender psychologist Anna Salter told Wired, arguing that continued exposure can reinforce existing attractions by legitimizing them.

But the article also reports some (inconclusive) research suggesting some pedophiles use pornography to redirect their urges and find an outlet that doesn’t involve directly harming a child.

Louisiana recently outlawed the possession or production of AI-generated fake child abuse images, joining a number of other states. In countries like Australia, the law makes no distinction between fake and real child pornography and already outlaws cartoons.

Amazon’s AI summaries are net positive

Amazon has rolled out AI-generated review summaries to some users in the United States. On the face of it, this could be a real time saver, allowing shoppers to find out the distilled pros and cons of products from thousands of existing reviews without reading them all.

But how much do you trust a massive corporation with a vested interest in higher sales to give you an honest appraisal of reviews?


Amazon already defaults to “most helpful” reviews, which are noticeably more positive than “most recent” reviews. And the select group of mobile users with access so far have already noticed that more pros are highlighted than cons.

Search Engine Journal’s Kristi Hines takes the merchant’s side and says summaries could “oversimplify perceived product problems” and “overlook subtle nuances – like user error” that “could create misconceptions and unfairly harm a seller’s reputation.” This suggests Amazon will be under pressure from sellers to juice the reviews.


So Amazon faces a tricky line to walk: being positive enough to keep sellers happy but also including the flaws that make reviews so valuable to customers.

Microsoft’s must-see food bank

Microsoft was forced to remove a travel article about Ottawa’s 15 must-see sights that listed the “beautiful” Ottawa Food Bank at number three. The entry ends with the bizarre tagline, “Life is already difficult enough. Consider going into it on an empty stomach.”

Microsoft claimed the article was not published by an unsupervised AI and blamed “human error” for the publication.

“In this case, the content was generated through a combination of algorithmic techniques with human review, not a large language model or AI system. We’re working to ensure this type of content isn’t posted in future.”

Debate over AI and job losses continues

What everyone wants to know is whether AI will cause mass unemployment or simply change the nature of jobs. The fact that most people still have jobs despite a century or more of automation and computers suggests the latter, and so does a new report from the United Nations International Labour Organization.

Most jobs are “more likely to be complemented rather than substituted by the latest wave of generative AI, such as ChatGPT”, the report says.

“The greatest impact of this technology is likely to not be job destruction but rather the potential changes to the quality of jobs, notably work intensity and autonomy.”

It estimates around 5.5% of jobs in high-income countries are potentially exposed to generative AI, with the effects disproportionately falling on women (7.8% of female employees) rather than men (around 2.9% of male employees). Admin and clerical roles, typists, travel consultants, scribes, contact center information clerks, bank tellers, and survey and market research interviewers are most under threat.


A separate study from Thomson Reuters found that more than half of Australian lawyers are worried about AI taking their jobs. But are these fears justified? The legal system is incredibly expensive for ordinary people, so it seems just as likely that cheap AI lawyer bots will simply expand the affordability of basic legal services and clog up the courts.


How companies use AI today

There are a lot of pie-in-the-sky speculative use cases for AI in 10 years’ time, but how are big companies using the tech now? The Australian newspaper surveyed the country’s biggest companies to find out. Online furniture retailer Temple & Webster is using AI bots to handle pre-sale inquiries and is working on a generative AI tool so customers can create interior designs to get an idea of how its products will look in their homes.

Treasury Wines, which produces the prestigious Penfolds and Wolf Blass brands, is exploring the use of AI to cope with fast-changing weather patterns that affect vineyards. Toll road company Transurban has automated incident detection equipment monitoring its huge network of traffic cameras.

Sonic Healthcare has invested in Harrison.ai’s cancer detection systems for better diagnosis of chest and brain X-rays and CT scans. Sleep apnea device provider ResMed is using AI to free up nurses from the boring work of monitoring sleeping patients during tests. And hearing implant company Cochlear is using the same tech Peter Jackson used to clean up grainy footage and audio for The Beatles: Get Back documentary for signal processing and to eliminate background noise for its hearing products.

All killer, no filler AI news

- Six entertainment companies, including Disney, Netflix, Sony and NBCUniversal, have advertised 26 AI jobs in recent weeks with salaries ranging from $200,000 to $1 million.

- New research published in Gastroenterology journal used AI to examine the medical records of 10 million U.S. veterans. It found the AI is able to detect some esophageal and stomach cancers three years prior to a doctor being able to make a diagnosis.

- Meta has released an open-source AI model that can instantly translate and transcribe 100 different languages, bringing us ever closer to a universal translator.

- The New York Times has blocked OpenAI’s web crawler from reading and then regurgitating its content. The NYT is also considering legal action against OpenAI for intellectual property rights violations.

Pictures of the week

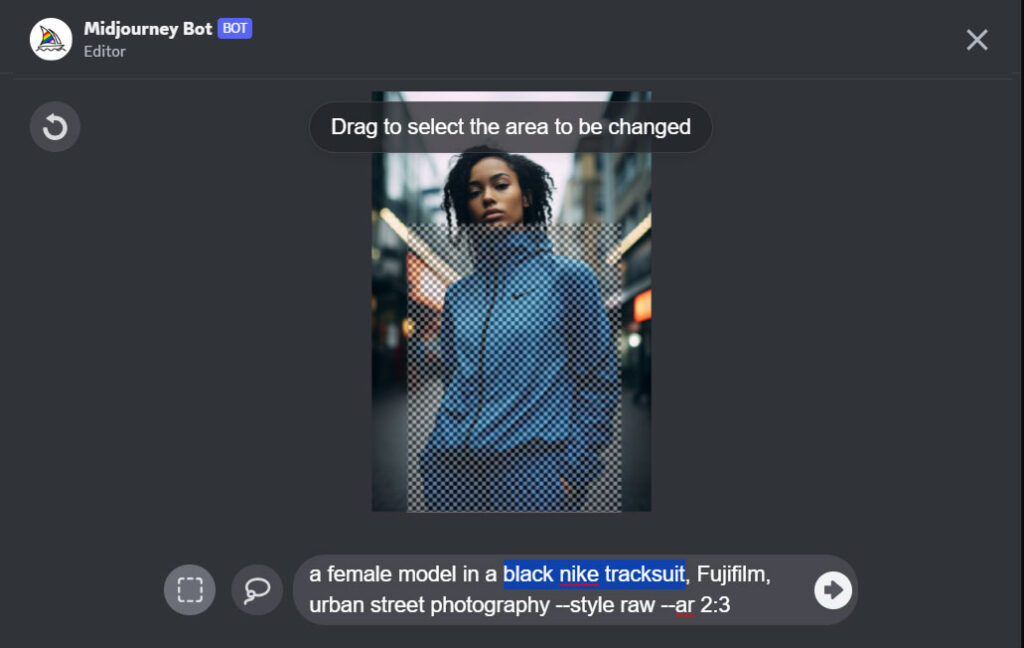

Midjourney has caught up with Stable Diffusion and Adobe and now offers Inpainting, which appears as “Vary (Region)” in the list of tools. It enables users to select part of an image and add a new element. For example, you can grab a pic of a woman, select the region around her hair, type in “Christmas hat,” and the AI will plonk a hat on her head.

Midjourney admits the feature isn’t perfect and works better when used on larger areas of an image (20%-50%) and for changes that are more sympathetic to the original image rather than basic and outlandish.

Creepy AI protests video

Asking an AI to create a video of protests against AIs resulted in this creepy video that will turn you off AI forever.
